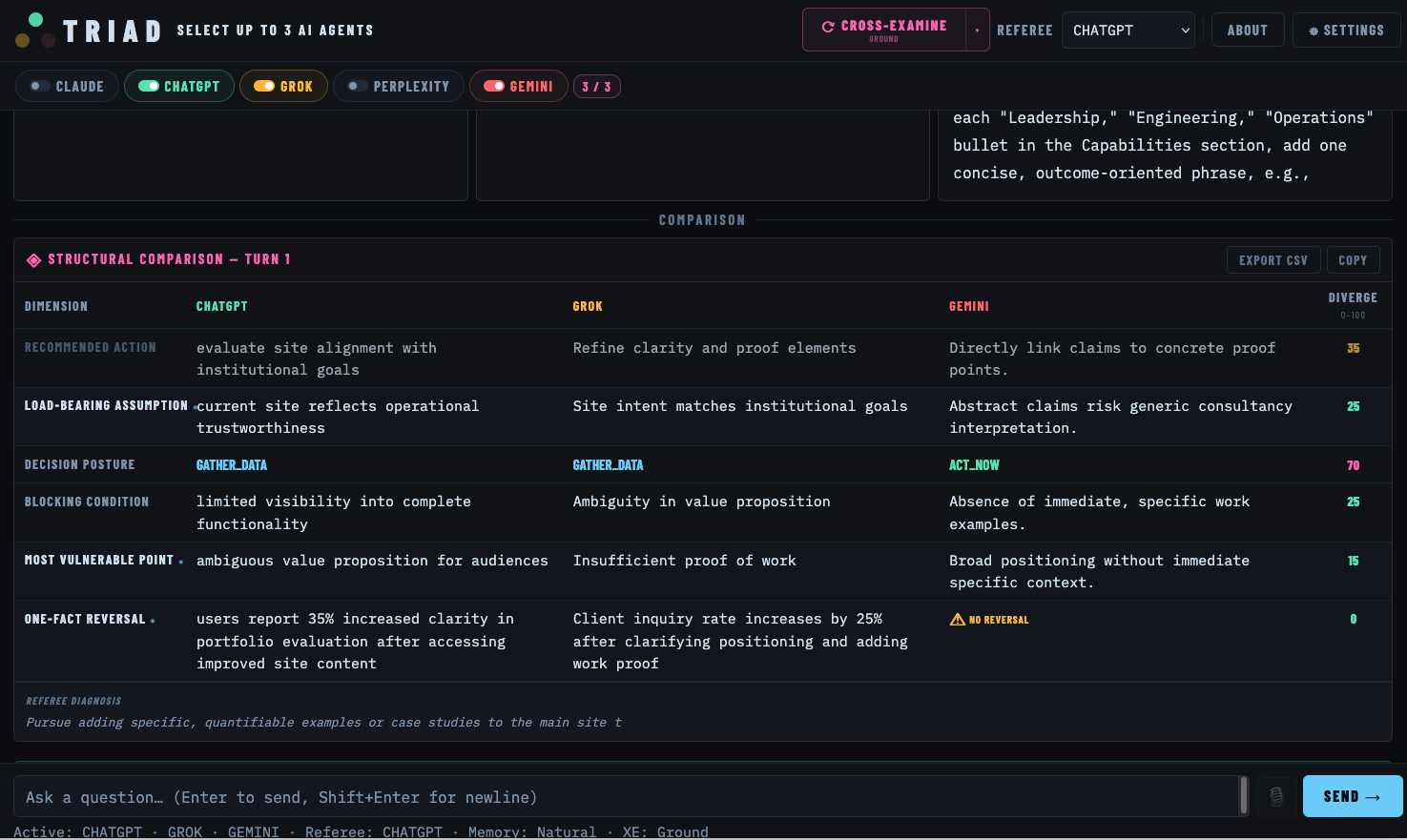

Morgan Harabedian noticed a pattern. Responses from a single AI system were consistently well-formed and confident, but rarely challenged — disagreements that would have sharpened the thinking never surfaced.

He changed his workflow, running the same question across multiple models in parallel and then asking each system to evaluate the others: where they agreed, where they differed, and what assumptions were being made. The decisions improved, but the process required constant copy/paste and manual moderation to keep the comparison structured.

That workflow became TRIAD.

TRIAD is a multi-agent deliberation system. You ask a question and multiple AI agents respond independently, after which their outputs are structured into a direct comparison. A separate referee model analyzes the differences and identifies what actually matters — whether more analysis is needed, a key fact is missing, or the decision is ready.
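The flow described above can be sketched roughly as follows. This is an illustration only, not TRIAD's actual code: the `Agent` and `Referee` callables, the `Verdict` names, and the `toy_referee` stand-in are all assumptions made for the example. In a real system each callable would wrap a model API call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict

# Hypothetical types: an agent maps a question to an answer,
# a referee maps the question plus all answers to a verdict.
Agent = Callable[[str], str]


class Verdict(Enum):
    NEEDS_ANALYSIS = "more analysis is needed"
    MISSING_FACT = "a key fact is missing"
    READY = "the decision is ready"


Referee = Callable[[str, Dict[str, str]], Verdict]


@dataclass
class Round:
    question: str
    answers: Dict[str, str]  # agent name -> independent answer
    verdict: Verdict


def deliberate(question: str, agents: Dict[str, Agent], referee: Referee) -> Round:
    """Collect independent answers in parallel, then ask the referee to assess them."""
    # Each agent sees only the question, never another agent's answer.
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        futures = {name: pool.submit(agent, question) for name, agent in agents.items()}
        answers = {name: future.result() for name, future in futures.items()}
    return Round(question, answers, referee(question, answers))


def toy_referee(question: str, answers: Dict[str, str]) -> Verdict:
    """Stand-in referee: treats identical answers as agreement (assumption for the demo)."""
    if len(set(answers.values())) == 1:
        return Verdict.READY
    return Verdict.NEEDS_ANALYSIS
```

Calling `deliberate("Should we ship?", {"a": agent_a, "b": agent_b}, toy_referee)` returns a `Round` that holds every answer side by side along with the referee's verdict. Keeping the referee separate from the responding agents mirrors the description above, where the comparison is judged by a model that did not produce any of the answers.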

If needed, a cross-examination phase sends the agents back in with a specific challenge mode — probing assumptions, testing whether conclusions are actually supported, or pushing toward concrete operational realities. The system re-evaluates how positions change under pressure, then diagnoses what kind of disagreement remains and recommends the most useful next step.
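A cross-examination round of this kind might look something like the sketch below. The three `ChallengeMode` values paraphrase the modes named above, but the prompt wording, the function names, and the simple string comparison used to detect a changed position are all hypothetical. A real system would likely use a model to judge whether a position actually shifted.

```python
from enum import Enum
from typing import Callable, Dict

Agent = Callable[[str], str]  # hypothetical: prompt in, answer out


class ChallengeMode(Enum):
    ASSUMPTIONS = "State the assumptions behind your answer and defend each one."
    SUPPORT = "Show that your reasoning actually supports your conclusion."
    OPERATIONS = "Describe concretely how this would work in practice."


def cross_examine(
    question: str,
    answers: Dict[str, str],
    agents: Dict[str, Agent],
    mode: ChallengeMode,
) -> Dict[str, str]:
    """Send each agent back in with its own answer, the others' answers, and a challenge."""
    revised = {}
    for name, agent in agents.items():
        others = "\n".join(f"{n}: {a}" for n, a in answers.items() if n != name)
        prompt = (
            f"Question: {question}\n"
            f"Your answer: {answers[name]}\n"
            f"Other answers:\n{others}\n"
            f"Challenge: {mode.value}"
        )
        revised[name] = agent(prompt)
    return revised


def positions_changed(before: Dict[str, str], after: Dict[str, str]) -> Dict[str, bool]:
    """Naive check: flag an agent as having moved if its answer text differs."""
    return {name: before[name] != after[name] for name in before}
```

Comparing the answers from before and after the challenge is what lets the system report how positions held up under pressure, rather than just producing a second set of answers.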

The result is not just multiple answers, but a guided reasoning workflow that surfaces real disagreement, tests it, and shows the user what to do next.

Before: single-model queries with affirming but unchallenged responses. After: structured multi-agent deliberation with visible disagreement and decision-ready synthesis.

triad.scvdata.com →

A single AI gives you confidence. TRIAD gives you calibration.